In a report last spring, Ashley LiBetti Mitchel and I wrote that there’s simply no magic cocktail of teacher preparation program requirements or personal characteristics that will guarantee someone becomes a great teacher.

Since we wrote that report, there’s been even more evidence showing the same thing. I like pictures, so I’m going to pull some key graphics to help illustrate one basic point: There’s really no definitive way to tell who’s going to be a good teacher before they start teaching.

First, there’s a lot of interest in “raising the bar” for the teaching profession. It’s not clear what this means exactly, but at root it implies that if we could somehow just recruit better people to become teachers, then “poof!” we’d have better teachers. “Better” can be defined in multiple ways, but 46 states and the District of Columbia use some form of the Praxis assessments to set a minimum bar for entry into the teaching profession. The “Praxis I” was a set of assessments focused on reading, writing, and mathematics content, and a 1999 analysis found its questions were roughly as difficult as what we ask of most high schoolers. The “Praxis II” encompasses a wide variety of subject-specific tests. The tests have been renamed, but most states still require teachers to pass the successors of the original Praxis tests.

The Praxis tests have been in place for a number of years and have been taken by millions of teachers. Some teacher candidates didn’t score very well on them, so the Praxis tests have kept lots of would-be teachers out of the profession. Does that mean they would have been bad teachers?

Not necessarily. Some low-scoring teachers make it into the teaching profession through waivers, and states have set different passing scores on the Praxis. Researchers have exploited those variations to see whether those minimum passing scores matter.

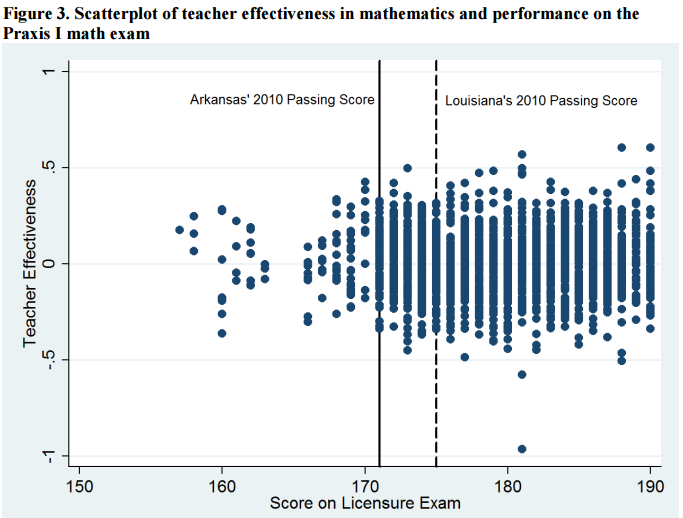

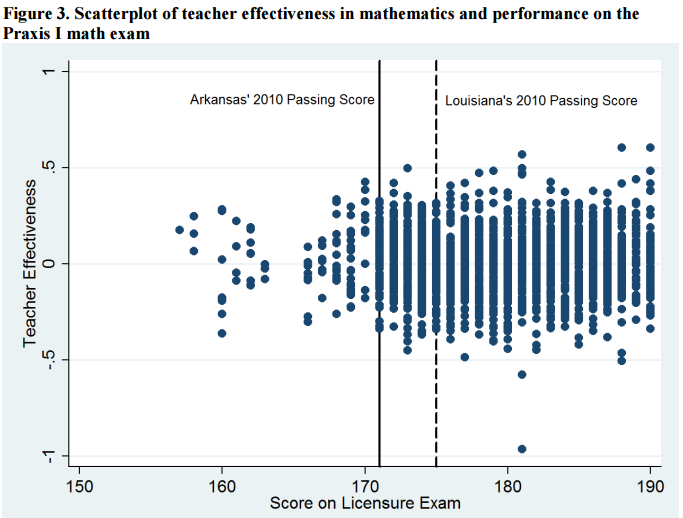

The graph below shows the results of one of those studies, a recent report from James Shuls for the Arkansas Center for Research in Economics. Every dot represents one teacher. The x-axis shows their Praxis I math scores, and the y-axis shows their value-added scores in teaching math in Arkansas schools. Shuls has inserted two vertical bars, one representing Arkansas’ Praxis passing score as of 2010 (the solid bar on the left), and the other representing a higher passing score used by Louisiana.

Should Arkansas “raise the bar” to match Louisiana? That’s not really clear. They’d block some lower-performing teachers from ever becoming teachers, but they’d also lose out on lots of teachers who perform about average or above average. Mostly, they’d just be limiting the supply of new teachers with no real effect on average teacher quality.

It’s hard to tell from the graph, but, from a purely statistical perspective, teachers who perform better on the Praxis math are, on average, better math teachers. But the differences are tiny, and there is wide variation at nearly every score. Some great teachers score poorly on the Praxis, and some poor teachers score well. A few years ago Dan Goldhaber found nearly the same thing when looking at North Carolina Praxis test-takers.

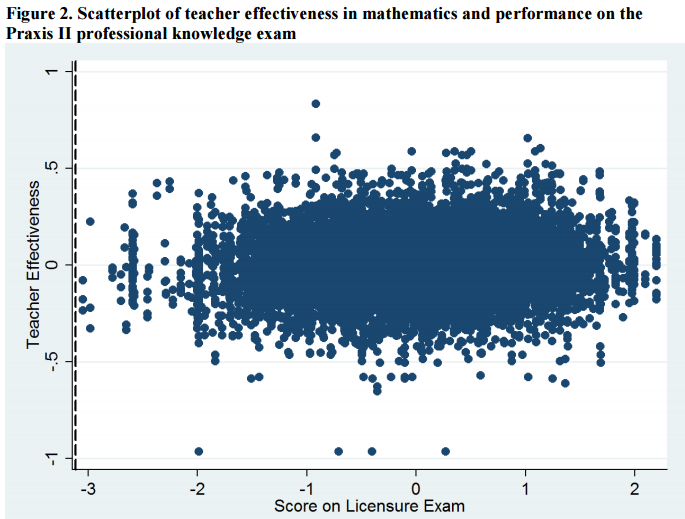

One common response to this sort of argument is that the Praxis I is too low-level to really discern teacher quality. Besides, it’s a generic content test, not a test of a teacher’s content knowledge in her future teaching position or her pedagogical knowledge. The Praxis II was designed to address these concerns. There are multiple Praxis II tests, all pitched to a particular content area or pedagogical skill set. So does the Praxis II do a better job of discerning who’s going to be a great teacher?

Again, the answer is not really. The second graph comes from the same Shuls paper, this time comparing Praxis II results with teacher effectiveness in math. Again, each dot represents one teacher, and again, there’s no discernible pattern. Some teachers who did well on the Praxis II are able to help students learn math, while others who scored just as well are unable to pass that knowledge on to their students.

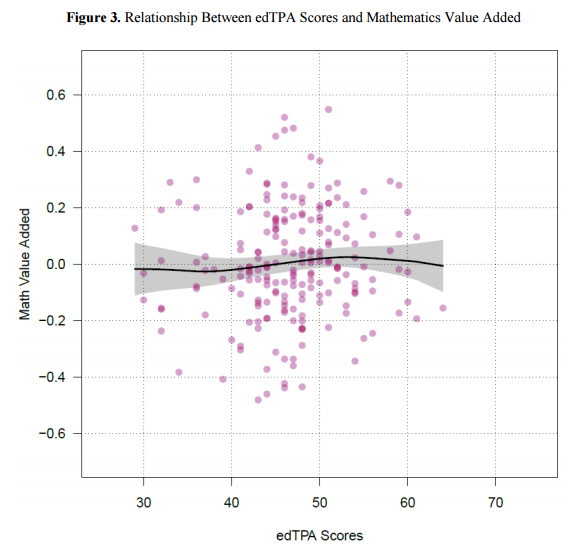

Okay, maybe the problem is the type of test. Rather than a multiple-choice, fill-in-the-blank Praxis test, we can design a different type of teacher assessment that’s more based on performance. The edTPA is one such assessment. It’s portfolio-based; it takes hours and hours to complete; and it combines video of teacher candidates delivering actual lessons, teacher lesson plans, student work, and candidate reflections. Surely this type of holistic assessment can tell us who’s going to be a good teacher and who won’t be?

This time, the answer is yes and no. Prospective teachers who score well on the edTPA tend to out-perform teachers who score poorly. A district choosing between two otherwise identical teachers would want to go with the one with the higher edTPA score.

But as a policy tool, the edTPA is not much better than the Praxis. The graph below, from a recent CALDER paper by Dan Goldhaber, James Cowan, and Roddy Theobald, tells a familiar story. There’s no clear point on the graph where a state would want to set a cut score. Every point by which a state “raises the bar” will mean more teachers screened out of the profession, without any real increase in average teacher quality.

As I’ve written before, we run into this same problem when we look at teacher preparation programs as a whole. In the state of Texas, for example, there are 100 different teacher preparation programs ranging from quick, district-run programs to extensive, traditional preparation programs run by Texas’ best colleges. Still, despite these wide differences in how the programs are run, they produce graduates who are statistically indistinguishable from each other.

Where does this leave us? As Shuls and Matt Barnum have pointed out, and as we wrote in our paper, policymakers have few useful tools to screen out “bad” teachers from the profession. The current screening tools are doing little more than unnecessarily limiting the supply of new teachers. Without better tools at their disposal, states should eliminate unhelpful barriers, stop pretending that “raising the bar” means anything, and let districts take responsibility for training and evaluating their employees.

However, that doesn’t mean we throw up our hands entirely. It just means the locus of control should shift from states to districts. As Cara Jackson and Kirsten Mackler point out, in addition to the Praxis and edTPA, candidate attitudes, qualifications, and practice lessons could all be useful in identifying potential teachers. But what’s useful for a district may not be actionable in policy, because picking the better of two possible teachers is a different question from whether those teachers deserve to enter the profession at all. There are different standards of evidence in each of those circumstances, and states should stop trying to do the impossible in finding the “right” bar to keep people out of the teaching profession.

August 22, 2016

What Does it Mean to “Raise the Bar” for Entry Into the Teaching Profession?

By Bellwether
